Natural language processing for humanitarian action: Opportunities, challenges, and the path toward humanitarian NLP

Natural language processing (NLP) has a variety of real-world applications in a number of fields, including medical research, search engines and business intelligence. Depending on their personality, intentions and emotions, authors and speakers might also use different styles to express the same idea. Some of these styles (such as irony or sarcasm) may convey a meaning that is opposite to the literal one.

Summarization is useful for articles and other lengthy texts where users may not want to spend time reading the entire document. Writing tools like MS Word and Grammarly use NLP to check text for grammatical errors. They do this by looking at the context of your sentence instead of just the words themselves. The traditional calculation of ROI (gains minus cost of investment, divided by cost of investment) is hard to apply because the data required to estimate potential variable operating costs depends on as-yet undeveloped NLP solutions. First, businesses need to ensure that their data is of high quality and is properly structured for NLP analysis.
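As a minimal sketch, the traditional ROI calculation described above can be written as a small function. The function name `roi` and the figures used here are hypothetical and only illustrate the arithmetic; they are not drawn from any real NLP project.

```python
def roi(gains: float, cost: float) -> float:
    """Return ROI as (gains - cost of investment) / cost of investment."""
    if cost <= 0:
        raise ValueError("cost of investment must be positive")
    return (gains - cost) / cost


# Hypothetical figures, for illustration only.
example = roi(gains=150_000, cost=100_000)
print(f"ROI: {example:.0%}")  # ROI: 50%
```

The difficulty noted above lies in the inputs rather than the formula: when operating costs cannot yet be estimated, `cost` is unknown and the result is only as good as the guess.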

What are the challenges of chatbots in customer service?

Natural Language Processing can be applied to various areas such as Machine Translation, Email Spam Detection, Information Extraction, Summarization and Question Answering. Next, we discuss some of these areas along with the relevant work done in those directions. To generate a text, we need a speaker or an application, and a generator or a program that renders the application’s intentions into a fluent phrase relevant to the situation. NLP can be classified into two parts, Natural Language Understanding (NLU) and Natural Language Generation (NLG), which cover the tasks of understanding and generating text respectively. The objective of this section is to discuss both. False positives occur when the NLP system detects a term that it should be able to understand but cannot reply to properly.

It is important to consider these limitations and carefully evaluate the potential applications and usefulness of LLMs before using them for various tasks. Natural language processing (NLP) is a field of artificial intelligence that focuses on the interaction between computers and human language. In the context of search engine optimization (SEO) and search engines, NLP plays a crucial role in helping search engines understand and interpret user queries, improving the accuracy and relevance of search results. This paper explores the basics of NLP, its applications in SEO and search engines, and the role of GPT-3 and Large Language Models (LLMs) in advancing the field. Various data labeling tools are specifically designed with artificial intelligence and machine learning.

Challenge 6: Multiple Language Support

Recently, several studies have been conducted to address the two above-mentioned concerns. However, most of these studies have used complex methods or deep knowledge of each task. For example, Bansal et al. (2014) used information from dependency trees to create TSWRs for dependency parsing (DEP). Further, Pinter et al. (2017) used an additional neural architecture to address the out-of-vocabulary (OOV) problem.

NLP can translate automatically from one language to another, which can be useful for businesses with a global customer base or for organizations working in multilingual environments. NLP programs can also detect the source language, using pretrained models and statistical methods that look at features such as word and character frequency. Virtual assistants can use several different NLP tasks like named entity recognition and sentiment analysis to improve results. While NLP systems achieve impressive performance on a wide range of tasks, there are important limitations to bear in mind. First, state-of-the-art deep learning models such as transformers require large amounts of data for pre-training.
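To make the character-frequency idea for language detection mentioned above more concrete, here is a rough sketch that compares character trigram counts using cosine similarity. The sample sentences, the two-language setup and the function names are all assumptions made for illustration; real detectors are trained on far larger corpora and many more languages.

```python
import math
from collections import Counter

# Tiny hypothetical reference texts; a real system would use large corpora.
SAMPLES = {
    "english": "the quick brown fox jumps over the lazy dog and the cat sleeps in the house",
    "spanish": "el rápido zorro marrón salta sobre el perro perezoso y el gato duerme en la casa",
}


def char_ngrams(text: str, n: int = 3) -> Counter:
    """Count overlapping character n-grams, padding with spaces at both ends."""
    padded = f" {text.lower()} "
    return Counter(padded[i:i + n] for i in range(len(padded) - n + 1))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


PROFILES = {lang: char_ngrams(text) for lang, text in SAMPLES.items()}


def detect(text: str) -> str:
    """Return the language whose trigram profile is most similar to the text."""
    grams = char_ngrams(text)
    return max(PROFILES, key=lambda lang: cosine(grams, PROFILES[lang]))


print(detect("the dog sleeps in the house"))
print(detect("el gato duerme en la casa"))
```

With profiles this small the approach is fragile on short or mixed-language input, which is one reason practical systems rely on pretrained models.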

In the second model, a document is generated by choosing a set of word occurrences and arranging them in any order. This model is called the multinomial model; unlike the multi-variate Bernoulli model, it also captures information on how many times a word is used in a document. Most text categorization approaches to anti-spam email filtering have used the multi-variate Bernoulli model (Androutsopoulos et al., 2000) [5] [15]. In the late 1940s the term NLP wasn’t in existence, but work on machine translation (MT) had already started.
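The difference between the two document models described above shows up in how a document is turned into features. The following sketch, which assumes a hypothetical four-word vocabulary and a made-up message, contrasts the binary presence vector of the multi-variate Bernoulli model with the count vector of the multinomial model. It covers only the feature representation, not a full classifier.

```python
from collections import Counter

# Hypothetical toy vocabulary, chosen only for illustration.
VOCAB = ["free", "money", "meeting", "offer"]


def bernoulli_features(tokens: list[str]) -> list[int]:
    """Multi-variate Bernoulli view: 1 if the word occurs at all, else 0."""
    present = set(tokens)
    return [1 if word in present else 0 for word in VOCAB]


def multinomial_features(tokens: list[str]) -> list[int]:
    """Multinomial view: how many times each vocabulary word occurs."""
    counts = Counter(tokens)
    return [counts[word] for word in VOCAB]


doc = "free money free offer".split()
print(bernoulli_features(doc))    # [1, 1, 0, 1]
print(multinomial_features(doc))  # [2, 1, 0, 1]
```

The repeated word "free" is invisible to the Bernoulli representation but counted twice in the multinomial one.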

This resource, developed remotely through crowdsourcing and automatic text monitoring, ended up being used extensively by agencies involved in relief operations on the ground. While at the time mapping of locations required intensive manual work, current resources (e.g., state-of-the-art named entity recognition technology) would make it significantly easier to automate multiple components of this workflow. Challenges in natural language processing frequently involve speech recognition, natural-language understanding, and natural-language generation. The main challenge of NLP is the understanding and modeling of elements within a variable context.

What are the benefits of natural language processing?

Capital One claims that Eno is the first natural language SMS chatbot from a U.S. bank that allows customers to ask questions using natural language. Customers can interact with Eno through a text interface, asking questions about their savings and other matters. This is a different platform from those used by other brands, which launch chatbots on services like Facebook Messenger and Skype. The bank believed that Facebook has too much access to a person’s private information, which could get it into trouble with the privacy laws that U.S. financial institutions work under. For example, a Facebook Page admin can access full transcripts of the bot’s conversations.

Review article abstracts targeting medication therapy management in chronic disease care were retrieved from Ovid Medline (2000–2016). Unique concepts in each abstract are extracted using MetaMap and their pair-wise co-occurrence is determined. This information is then used to construct a network graph of concept co-occurrence, which is further analyzed to identify content for the new conceptual model. Medication adherence is the most studied drug therapy problem and co-occurred with concepts related to patient-centered interventions targeting self-management. The framework requires additional refinement and evaluation to determine its relevance and applicability across a broad audience, including underserved settings. Here the speaker just initiates the process but doesn’t take part in the language generation.
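The pair-wise co-occurrence step described above can be sketched as follows. This assumes concept extraction has already been done (for example with MetaMap) and that each abstract is represented as a set of concept names. The concept sets here are invented for illustration and do not come from the study.

```python
from collections import Counter
from itertools import combinations

# Hypothetical concept sets, one per abstract (stand-ins for extracted concepts).
abstract_concepts = [
    {"medication adherence", "self-management", "patient education"},
    {"medication adherence", "self-management"},
    {"drug interaction", "patient education"},
]


def cooccurrence_counts(concept_sets):
    """Count how many abstracts mention each unordered pair of concepts."""
    counts = Counter()
    for concepts in concept_sets:
        # Sorting gives every pair a canonical (a, b) order with a < b.
        for a, b in combinations(sorted(concepts), 2):
            counts[(a, b)] += 1
    return counts


edges = cooccurrence_counts(abstract_concepts)
for (a, b), weight in edges.most_common():
    print(f"{a} -- {b}: {weight}")
```

Each counted pair can serve as a weighted edge in the co-occurrence network, with the concepts as nodes.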

For long-term sustainability, however, funding mechanisms suitable for supporting these cross-functional efforts will be needed. Seed-funding schemes supporting humanitarian NLP projects could be a starting point to explore the space of possibilities and develop scalable prototypes. In line with its aim of inspiring cross-functional collaborations between humanitarian practitioners and NLP experts, the paper targets a varied readership and assumes no in-depth technical knowledge. Named entity recognition is a core capability in Natural Language Processing (NLP). It is the process of extracting named entities from unstructured text and classifying them into predefined categories.

Phonology is the part of linguistics that deals with the systematic arrangement of sound. The term phonology comes from Ancient Greek, in which the term phono means voice or sound and the suffix –logy refers to word or speech. Phonology includes the semantic use of sound to encode the meaning of any human language. The use of NLP has become more prevalent in recent years as technology has advanced.

These components work together to enable a chatbot to understand language, respond accurately, hold conversations, and improve over time. From generative to retrieval-based models, a chatbot development company weighs all models to create an intelligent and interactive solution for your business. However, NLP has some limitations: it has difficulty adapting not only to different languages but also to different dialects and colloquial terms.

Using sentiment analysis, data scientists can assess comments on social media to see how their business’s brand is performing, or review customer notes to identify areas where people want the business to perform better. Businesses use massive quantities of unstructured, text-heavy data and need a way to process it efficiently. A lot of the information created online and stored in databases is natural human language, and until recently, businesses could not effectively analyze this data.

- While they have their limitations compared to machine learning techniques that can adapt based on data patterns, these algorithms still serve as an important foundation in various NLP applications.

- It is one of the main reasons chatbot development services are so high in demand.

- Still, they’re also more time-consuming to construct and evaluate their accuracy with new data sets.

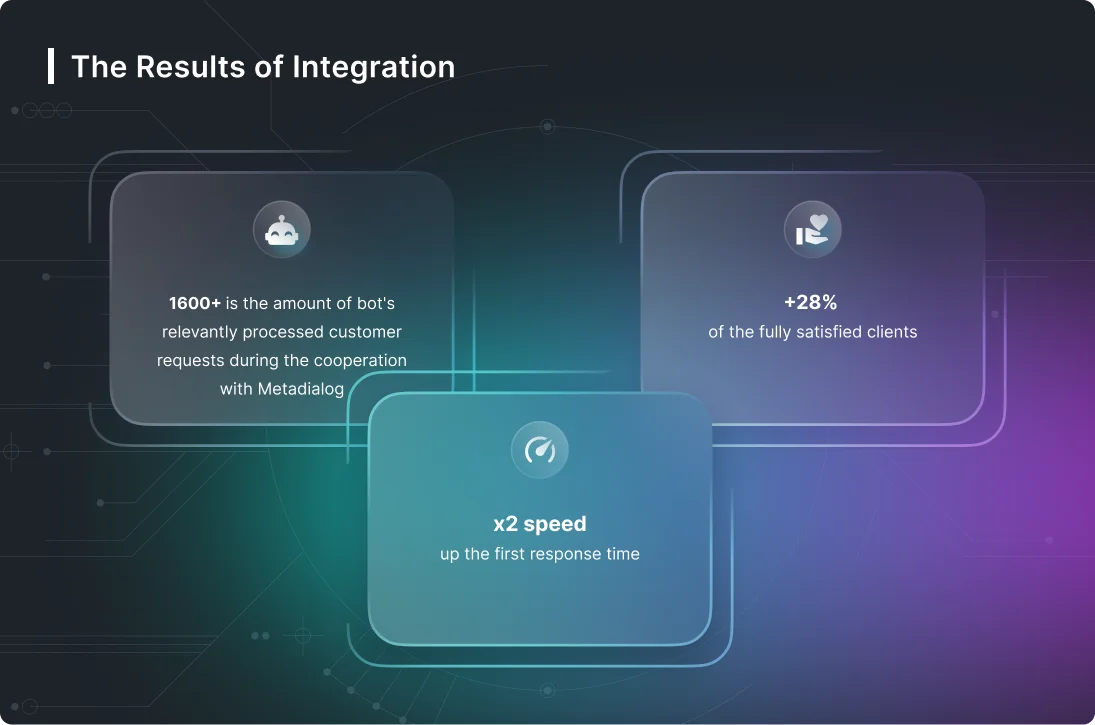
